Building a Web App with the Gradio Python Client

In this blog post, we will demonstrate how to use the gradio_client Python library, which enables developers to make requests to a Gradio app programmatically, by creating an end-to-end example web app using FastAPI. The web app we will be building is called "Acapellify," and it will allow users to upload video files as input and return a version of that video without instrumental music. It will also display a gallery of generated videos.

Prerequisites

Before we begin, make sure you are running Python 3.9 or later, and have the following libraries installed:

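- gradio_client
- fastapi
- uvicorn

You can install these libraries with pip:

$ pip install gradio_client fastapi uvicorn

The app in this post also uses Jinja2 templates and multipart file uploads, so depending on your FastAPI version you may need to install jinja2 and python-multipart as well.

You will also need to have ffmpeg installed. You can check whether you already have ffmpeg by running the following in your terminal:

$ ffmpeg -version

Otherwise, install ffmpeg by following these instructions.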
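If you would rather check for ffmpeg from Python, for example when the app starts up, a minimal sketch using only the standard library might look like the following. The helper name `ffmpeg_available` is hypothetical and not part of any library.

```python
import shutil


def ffmpeg_available(executable="ffmpeg"):
    """Return True if the given executable can be found on the PATH."""
    # shutil.which returns the full path to the executable, or None if it is absent.
    return shutil.which(executable) is not None


if __name__ == "__main__":
    if ffmpeg_available():
        print("ffmpeg found")
    else:
        print("ffmpeg not found; install it before running the app")
```

Calling this once at startup lets the app fail early with a clear message instead of failing on the first upload.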

Step 1: Write the Video Processing Function

Let's start with what seems like the most complex bit -- using machine learning to remove the music from a video.

Luckily for us, there's an existing Space we can use to make this process easier: https://huggingface.co/spaces/abidlabs/music-separation. This Space takes an audio file and produces two separate audio files: one with the instrumental music and one with all other sounds in the original clip. Perfect to use with our client!

Open a new Python file, say main.py, and start by importing the Client class from gradio_client and connecting it to this Space:

from gradio_client import Client, handle_file

client = Client("abidlabs/music-separation")

def acapellify(audio_path):
    result = client.predict(handle_file(audio_path), api_name="/predict")
    return result[0]

That's all the code that's needed. Notice that the API endpoint returns two audio files (one without the music, and one with just the music) in a list, so we just return the first element of the list.

Note: since this is a public Space, other users might be using it at the same time, which can make it slow. You can duplicate this Space with your own Hugging Face token to create a private Space that only you have access to, which lets you bypass the queue. To do that, simply replace the first two lines above with:

from gradio_client import Client, handle_file

client = Client.duplicate("abidlabs/music-separation", hf_token=YOUR_HF_TOKEN)

Everything else remains the same!
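Rather than pasting the token into main.py, one option is to read it from an environment variable. The sketch below assumes a variable named `HF_TOKEN`. Both that name and the helper `get_hf_token` are illustrative choices, not something gradio_client requires.

```python
import os


def get_hf_token(env_var="HF_TOKEN"):
    """Read a Hugging Face token from an environment variable (name is an assumption)."""
    token = os.environ.get(env_var)
    if not token:
        raise RuntimeError(
            f"Set the {env_var} environment variable to your Hugging Face token"
        )
    return token


# Intended usage with the snippet above (not executed here because it needs network access):
# client = Client.duplicate("abidlabs/music-separation", hf_token=get_hf_token())
```

This keeps the token out of source control and makes a missing token an explicit error.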

Now, of course, we are working with video files, so we first need to extract the audio from them. For this, we will use ffmpeg, which does a lot of the heavy lifting when it comes to working with audio and video files. The most common way to use ffmpeg is through the command line, which we'll call via Python's subprocess module.

Our video processing workflow will consist of three steps:

- First, we start by taking in a video filepath and extracting the audio using ffmpeg.
- Then, we pass the audio file through the acapellify() function above.
- Finally, we combine the new audio with the original video to produce a final acapellified video.

Here's the complete code in Python, which you can add to your main.py file:

import os
import subprocess

def process_video(video_path):
    # Extract the audio track from the video without re-encoding it
    old_audio = os.path.basename(video_path).split(".")[0] + ".m4a"
    subprocess.run(['ffmpeg', '-y', '-i', video_path, '-vn', '-acodec', 'copy', old_audio])

    # Remove the instrumental music using the Gradio client
    new_audio = acapellify(old_audio)

    # Combine the original video stream with the new audio track
    new_video = f"acap_{os.path.basename(video_path)}"
    subprocess.run(['ffmpeg', '-y', '-i', video_path, '-i', new_audio, '-map', '0:v', '-map', '1:a', '-c:v', 'copy', '-c:a', 'aac', '-strict', 'experimental', f"static/{new_video}"])

    return new_video

You can read up on the ffmpeg documentation if you'd like to understand all of the command line parameters, as they are beyond the scope of this tutorial.
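For readers who want a quick orientation anyway, here is a hedged sketch that builds the same two ffmpeg argument lists, with a comment on each flag. The helper names `extract_audio_cmd` and `replace_audio_cmd` are hypothetical. They only build the lists and do not run ffmpeg.

```python
def extract_audio_cmd(video_path, audio_path):
    """Build the ffmpeg arguments that pull the audio track out of a video."""
    return [
        "ffmpeg",
        "-y",               # overwrite the output file without prompting
        "-i", video_path,   # input file
        "-vn",              # do not include the video stream in the output
        "-acodec", "copy",  # copy the audio stream as-is, without re-encoding
        audio_path,
    ]


def replace_audio_cmd(video_path, audio_path, output_path):
    """Build the ffmpeg arguments that pair the original video with new audio."""
    return [
        "ffmpeg",
        "-y",
        "-i", video_path,   # input 0: the original video
        "-i", audio_path,   # input 1: the new audio
        "-map", "0:v",      # take the video stream from input 0
        "-map", "1:a",      # take the audio stream from input 1
        "-c:v", "copy",     # copy the video stream without re-encoding
        "-c:a", "aac",      # encode the audio as AAC
        "-strict", "experimental",  # kept from the original command
        output_path,
    ]


if __name__ == "__main__":
    print(" ".join(extract_audio_cmd("clip.mp4", "clip.m4a")))
    print(" ".join(replace_audio_cmd("clip.mp4", "vocals.wav", "static/acap_clip.mp4")))
```

Each list can be passed directly to subprocess.run, as process_video() does above.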

Step 2: Create a FastAPI app (Backend Routes)

Next up, we'll create a simple FastAPI app. If you haven't used FastAPI before, check out the great FastAPI docs. Otherwise, this basic template, which we add to main.py, will look pretty familiar:

import os
from fastapi import FastAPI, File, UploadFile, Request
from fastapi.responses import HTMLResponse, RedirectResponse
from fastapi.staticfiles import StaticFiles
from fastapi.templating import Jinja2Templates

app = FastAPI()
os.makedirs("static", exist_ok=True)
app.mount("/static", StaticFiles(directory="static"), name="static")
templates = Jinja2Templates(directory="templates")

videos = []

@app.get("/", response_class=HTMLResponse)
async def home(request: Request):
    return templates.TemplateResponse(
        "home.html", {"request": request, "videos": videos})

@app.post("/uploadvideo/")
async def upload_video(video: UploadFile = File(...)):
    video_path = video.filename
    with open(video_path, "wb+") as fp:
        fp.write(video.file.read())

    new_video = process_video(video_path)
    videos.append(new_video)
    return RedirectResponse(url='/', status_code=303)

In this example, the FastAPI app has two routes: / and /uploadvideo/.

The / route returns an HTML template that displays a gallery of all uploaded videos.

The /uploadvideo/ route accepts a POST request with an UploadFile object, which represents the uploaded video file. The video file is "acapellified" via the process_video() function, and the name of the output video is appended to an in-memory list of all processed videos.
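One thing to keep in mind is that upload_video is declared with async def but calls the blocking process_video() function directly, so the server cannot handle other requests while a video is being processed. One possible way around this, sketched below with a stand-in function instead of the real process_video(), is to hand the blocking call to a worker thread with asyncio.to_thread (available from Python 3.9). The names `slow_process` and `handle_upload` are hypothetical.

```python
import asyncio
import time


def slow_process(name):
    """Stand-in for a blocking call such as process_video()."""
    time.sleep(0.1)
    return f"acap_{name}"


async def handle_upload(name):
    # Run the blocking function in a worker thread so the event loop stays free.
    return await asyncio.to_thread(slow_process, name)


async def main():
    # Both uploads are processed concurrently instead of one after the other.
    return await asyncio.gather(handle_upload("a.mp4"), handle_upload("b.mp4"))


if __name__ == "__main__":
    print(asyncio.run(main()))
```

Another option is to declare the route with a plain def, in which case FastAPI runs it in a thread pool for you.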

Note that this is a very basic example. If this were a production app, you would need to add more logic to handle file storage, user authentication, and security considerations.
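As one small illustration of the security point, the uploaded filename comes from the client and should not be trusted as a path. The sketch below shows one way to derive a safe, unique name before saving. The helper `safe_upload_name` and the extension allow-list are assumptions made for this example and are not part of the app above.

```python
import os
import uuid

# Hypothetical allow-list; adjust to the formats you actually want to accept.
ALLOWED_EXTENSIONS = {".mp4", ".mov", ".webm"}


def safe_upload_name(filename):
    """Return a unique file name that keeps only a validated extension."""
    # basename drops directory components such as "../../etc/".
    base = os.path.basename(filename.replace("\\", "/"))
    _, ext = os.path.splitext(base)
    ext = ext.lower()
    if ext not in ALLOWED_EXTENSIONS:
        raise ValueError(f"Unsupported file type: {ext or '(none)'}")
    # A random name avoids collisions between uploads with the same filename.
    return f"{uuid.uuid4().hex}{ext}"


if __name__ == "__main__":
    print(safe_upload_name("../../etc/holiday clip.MP4"))
```

In the route, this name could be used in place of video.filename when writing the upload to disk.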

Step 3: Create a FastAPI app (Frontend Template)

Finally, we create the frontend of our web application. First, we create a folder called templates in the same directory as main.py. We then create a template, home.html inside the templates folder. Here is the resulting file structure:

├── main.py
├── templates
│   └── home.html

Write the following as the contents of home.html:

<!DOCTYPE html>
<html>
  <head>
    <title>Video Gallery</title>
    <style>
      body {
        font-family: sans-serif;
        margin: 0;
        padding: 0;
        background-color: #f5f5f5;
      }
      h1 {
        text-align: center;
        margin-top: 30px;
        margin-bottom: 20px;
      }
      .gallery {
        display: flex;
        flex-wrap: wrap;
        justify-content: center;
        gap: 20px;
        padding: 20px;
      }
      .video {
        border: 2px solid #ccc;
        box-shadow: 0px 0px 10px rgba(0, 0, 0, 0.2);
        border-radius: 5px;
        overflow: hidden;
        width: 300px;
        margin-bottom: 20px;
      }
      .video video {
        width: 100%;
        height: 200px;
      }
      .video p {
        text-align: center;
        margin: 10px 0;
      }
      form {
        margin-top: 20px;
        text-align: center;
      }
      input[type="file"] {
        display: none;
      }
      .upload-btn {
        display: inline-block;
        background-color: #3498db;
        color: #fff;
        padding: 10px 20px;
        font-size: 16px;
        border: none;
        border-radius: 5px;
        cursor: pointer;
      }
      .upload-btn:hover {
        background-color: #2980b9;
      }
      .file-name {
        margin-left: 10px;
      }
    </style>
  </head>
  <body>
    <h1>Video Gallery</h1>
    {% if videos %}
    <div class="gallery">
      {% for video in videos %}
      <div class="video">
        <video controls>
          <source src="{{ url_for('static', path=video) }}" type="video/mp4">
          Your browser does not support the video tag.
        </video>
        <p>{{ video }}</p>
      </div>
      {% endfor %}
    </div>
    {% else %}
    <p>No videos uploaded yet.</p>
    {% endif %}
    <form action="/uploadvideo/" method="post" enctype="multipart/form-data">
      <label for="video-upload" class="upload-btn">Choose video file</label>
      <input type="file" name="video" id="video-upload">
      <span class="file-name"></span>
      <button type="submit" class="upload-btn">Upload</button>
    </form>
    <script>
      // Display selected file name in the form
      const fileUpload = document.getElementById("video-upload");
      const fileName = document.querySelector(".file-name");

      fileUpload.addEventListener("change", (e) => {
        fileName.textContent = e.target.files[0].name;
      });
    </script>
  </body>
</html>

Step 4: Run your FastAPI app

Finally, we are ready to run our FastAPI app, powered by the Gradio Python Client!

Open up a terminal and navigate to the directory containing main.py. Then run the following command in the terminal:

$ uvicorn main:appYou should see an output that looks like this:

Loaded as API: https://abidlabs-music-separation.hf.space ✔

INFO: Started server process [1360]

INFO: Waiting for application startup.

INFO: Application startup complete.

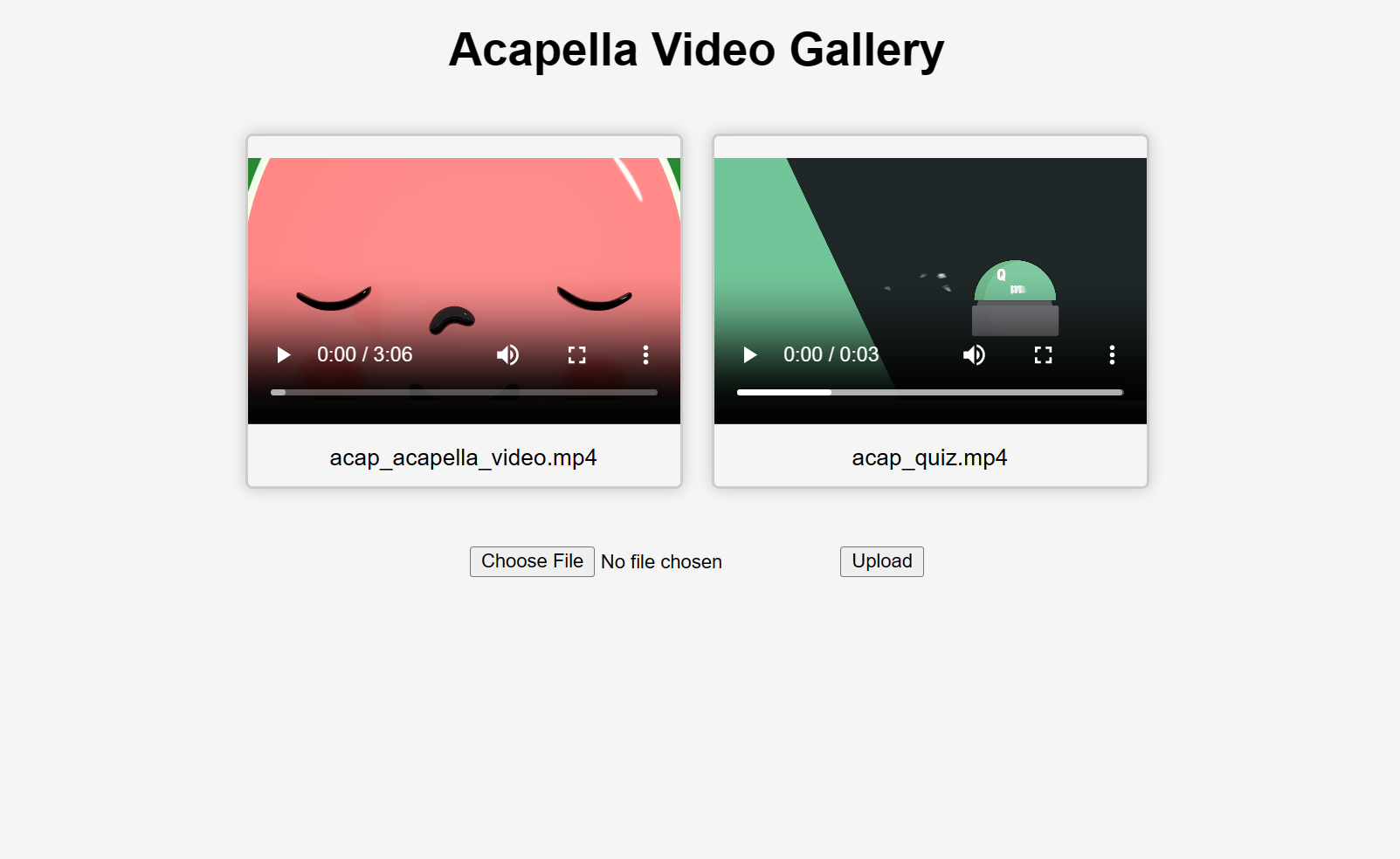

INFO: Uvicorn running on http://127.0.0.1:8000 (Press CTRL+C to quit)And that's it! Start uploading videos and you'll get some "acapellified" videos in response (might take seconds to minutes to process depending on the length of your videos). Here's how the UI looks after uploading two videos:

If you'd like to learn more about how to use the Gradio Python Client in your projects, read the dedicated Guide.